New Problems Become Post-Quantum Solutions

Alessandro Pino (LINKS Foundation)

06/11/2023

A Cryptographically Relevant Quantum Computer (CRQC) can threaten most of the traditional public-key cryptography currently in use. Shor’s algorithm can break the RSA algorithm, which depends on the difficulty of factoring integers, and Diffie-Helman, which depends on the difficulty of the discrete logarithm problem (see preceding post Quantum Computers’ Role in Shor’s Algorithm).

If and when a CRQC occurs, this may obviously turn into a major issue for society: if we are no longer able to exchange messages, make payments, or sign transactions online in a safe manner, then much of our digital economy and society may collapse, or at the very least has to be drastically reorganized.

But there is a solution: Post-Quantum Cryptography (PQC) is the branch of cryptography that develops cryptographic methods resistant to attack by adversaries using quantum computers. New algebraic and number theory problems, difficult for quantum computers to solve, are being developed and studied by mathematicians and cryptographers as a foundation for asymmetric algorithms security. Most post-quantum algorithms now belong to six different families:

- Hash based cryptography, used to build digital signature schemes dependent on the resistance to collisions and preimages of the hash function.

- Code-based cryptography, based on error-correcting codes which are widely used in communications for resolving transmission issues.

- Multivariate cryptography, based on the hard mathematics problem of solving a system of multivariate polynomials.

- Isogeny Based Cryptography, a new field that emerged in the 2000s and has its foundations in Elliptic Curve Cryptography.

- Non-commutative cryptography, based on algebraic structures like semigroups, groups and rings which are non-commutative.

- Lattice-based cryptography, based on hard mathematics lattice problem and extensively investigated.

This blogpost focuses on the lattice-based cryptography, which is versatile in the types of cryptographic schemes it supports, and it is the most studied PQC today. Three of the National Institute of Standards and Technology (NIST) choices for post-quantum algorithms are based on lattices: CRISTALS-Kyber, CRISTALS-Dilithium and Falcon.

Focus: Lattice based problems

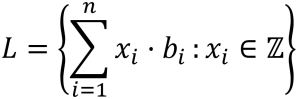

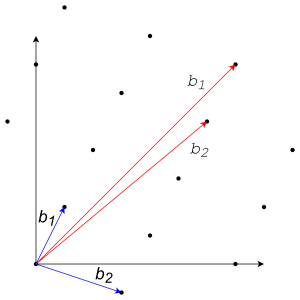

Lattices are geometric objects that can be intuitively described as a set of points in a n-dimensional space arranged periodically. More formally, a lattice can be defined as the set of all the integer linear combination of linearly independent basis vectors B = {b1, …, bn} ⊂ ℝn:

2-dimensional example of a lattice. The image gives an informal idea on the meaning of the “goodness” of a basis. In blue a “good” basis vector, in red a “bad” basis vector. |

The same lattice has many different bases. A problem on lattice is easy or hard based on the basis you are given.

In general, defining a lattice means defining its vector basis, because any point in a lattice can be reached by combining b1, …, bn. It is easy to find every point on a lattice if you are given “good” vector basis, but if you are given “bad” vector basis, it turns out it is hard to define every point belonging to a lattice. Informally, the goodness of a set of vector basis is leveraged by how much the vectors are perpendicular between them. To grasp this concept, simplifying as much as possible, you can think that spanning the lattice just adding and subtracting two almost parallel vectors is harder than using two almost perpendicular vectors.

Using the trick of the basis vectors, some problems were built on lattices, such as:

Shortest Vector Problem (SVP): Given a lattice L defined with its basis vectors of dimension n, find a nonzero vector v ∈ L that minimizes the Euclidean norm ||v ||.

That is, find the lattice point, which is closest to, but not equal to, the origin.

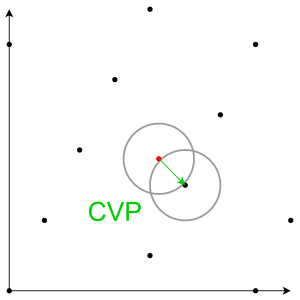

Closest Vector Problem (CVP): Given a lattice L defined with its basis vectors of dimension n, and a vector w ∉ L, find v ∈ L that minimizes the Euclidean norm ||w – v ||.

That is, find the lattice point which is closest to a particular point in space.

If these problems seem simple looking at the image, note that it was in two dimensions, but the lattice used to build cryptosystems are n-dimensional with n a noticeably large number!

These are the precursors of the problems on which is based the new post-quantum lattice cryptography, like the Short Integer Solution (SIS) or its dual problem and more used Learning With Errors (LWE).

On lattice cryptography, among others you can refer to [MR09].

Focus: Learning With Errors (LWE) problem

The LWE problem was introduced in 2005 by Oded Regev [Reg05], and it immediately has had a major influence on PQC. LWE is a technique for masking a secret value by adding noise (errors) to the correct equations that encode the secret.

The LWE problem asks to find a secret solution

![]()

given a series of ‘approximate’ random linear equations on s. For example,

3s1 + 2s2 ≈ 6 (mod 7)

s1 + 5s2 ≈ 5 (mod 7)

2s1 + s2 ≈ 4 (mod 7)

where each equation is correct up to some small additive error (say, ±1), and our goal is to recover s = (s1, s2). Finding s would be simple if the errors were absent; with just n equations, we can determine s in polynomial time by applying Gaussian elimination. The inaccuracy makes this problem more challenging.

What is the relationship with the lattices?

Every correct equation is encoded with a lattice point. Adding an error means moving a certain distance from the lattice point. Thus, finding the correct solution means finding the lattice point closest to the shifted point. But we stated before that finding the lattice point which is closest to a particular point in space (CVP), given a bad vector basis, is a hard problem!

This is just a glimpse of the problem. For more details, please refer to the expository notes [Reg10] or LWE lecture by Chris Peikert (see https://www.youtube.com/watch?v=K_fNK04yG4o).

A transition with so many uncertainties requires a hybrid approach

Experts in cryptography throughout the globe were invited by NIST to propose potential algorithms to the Post-Quantum Cryptography Standardization Project. The potential algorithms have been made available by NIST for analysing and, if possible, cracking by specialists. Several rounds of evaluation then whittled down the list of potential candidates until NIST made its selection in July 2022:

- CRYSTALS-Kyber, a key encapsulation method based on LWE.

- CRYSTALS-Dilithium, a digital signature based on LWE.

- SPHINCS+, a hash-based signature scheme.

- FALCON, a digital signature based on a problem called NTRU.

A second set of algorithms was later selected for a second study to complement the first set (https://csrc.nist.gov/Projects/pqc-dig-sig/round-1-additional-signatures), in addition to the four algorithms previously selected by NIST. To distinguish between the basic assumptions, NIST was interested in new general-purpose signature schemes that were not dependent on structured lattices. The need for backups was underlined when two algorithms that were initially candidates turned out to be vulnerable:

- Experts outside NIST cracked SIKE with a conventional computer [CD23].

- Breaking Rainbow takes a weekend on a laptop [Beu22].

The scale of the post-quantum transition is the most important thing to grasp. Post-quantum algorithms have higher computational, memory, storage, and communication needs than conventional algorithms (e.g., bigger key sizes, more sophisticated algorithms, …). In few situations, a simple replacement is feasible, while in other circumstances, a complete protocol redesign is required.

The transition to PQC is complex also due to the relative immaturity of several underlying mathematical assumptions. Because these assumptions have not received as much attention as more established ones, they may become vulnerable in the years to come. In addition, some solutions could not be migrated to PQC, resulting in incompatibility between traditional and post-quantum deployments.

The uncertainty surrounding the transition is also changing the terminology. For instance, NIST algorithms are now called “quantum-resistant” rather than “quantum proof” in recognition of the potential for a quantum computer to eventually be able to breach cryptography standards even more quickly.

These issues encourage hybrid approaches, in which one or more post-quantum algorithms are used in combination with a traditional algorithm. In this way, the scheme maintains the security of the post-quantum algorithm if the traditional is compromised by a CRQC, while remaining as safe as the traditional algorithm if the post-quantum assumption proves to be flawed in the future. A cryptographic scheme with more than one component algorithm where at least one component algorithm is a post-quantum algorithm and at least one is a traditional algorithm is formally denoted as Post-Quantum/Traditional (PQ/T) hybrid scheme [Dri23].